Cloud Controller


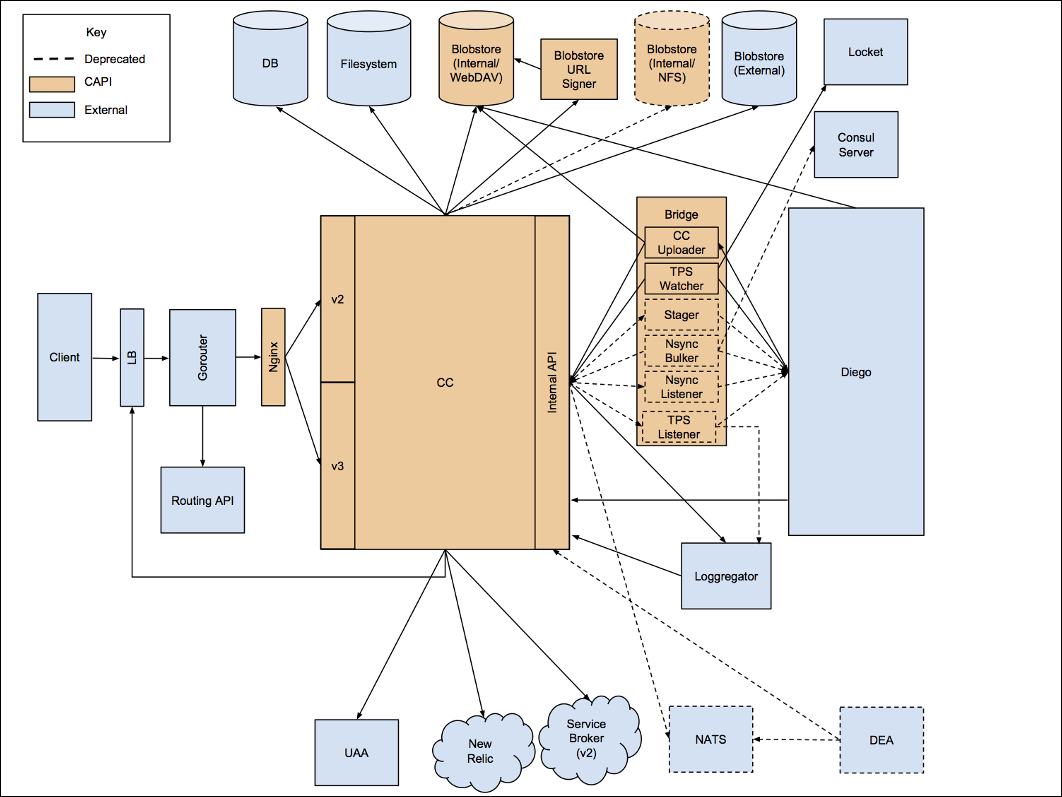

The Cloud Controller in Cloud Foundry provides REST API endpoints that clients use to access the system. It maintains a database with tables for orgs, spaces, services, user roles, and more.
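As a rough illustration of what calling these REST endpoints can look like, here is a minimal shell sketch. It assumes a hypothetical API root of https://api.example.com, a logged-in cf CLI (whose `cf oauth-token` command prints an authorization header value), and the `/v3/organizations` path as an example resource. The helper names `cc_url` and `cc_get` are made up for this sketch and are not part of any Cloud Foundry tooling.

```shell
# Hypothetical API root; replace with the API endpoint of your deployment.
CC_API="${CC_API:-https://api.example.com}"

# Build the full URL for a Cloud Controller API path.
# Trims one trailing slash from the root and one leading slash from the path.
cc_url() {
  printf '%s/%s\n' "${CC_API%/}" "${1#/}"
}

# Send an authenticated GET request to the given API path.
# Assumes curl is installed and the cf CLI is logged in.
cc_get() {
  curl --silent --fail \
    -H "Authorization: $(cf oauth-token)" \
    "$(cc_url "$1")"
}

# Example (needs network access and a logged-in cf CLI):
#   cc_get /v3/organizations
```

Keeping URL construction separate from the request makes it possible to check the path handling without a live deployment.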

The diagram above shows the internal and external communications of the Cloud Controller.

Diego Auction

The Cloud Controller uses the Diego Auction to balance application processes over the Diego Cells in a Cloud Foundry installation.

Database (CC_DB)

The Cloud Controller database has been tested with Postgres and MySQL.

Blobstore

To stage and run apps, Cloud Foundry manages and stores app source code and other files in an S3-compatible blobstore.

For more information, see Cloud Controller blobstore.

Testing

By default, rspec runs the test suite against an in-memory SQLite database.

To test against Postgres or MySQL, specify a connection string using the DB_CONNECTION environment variable. For example:

DB_CONNECTION="postgres://postgres@localhost:5432/ccng" rspec

DB_CONNECTION="mysql2://root:password@localhost:3306/ccng" rspec
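To run the suite against both databases in one pass, the two commands above can be wrapped in a small loop. This is a sketch only: the connection strings are copied from the examples above, while the `run_all` and `db_scheme` helpers and the `RSPEC` override variable are hypothetical names introduced here and are not part of the Cloud Controller tooling.

```shell
# Print the scheme of a connection string (the text before "://").
db_scheme() {
  printf '%s\n' "${1%%://*}"
}

# Run the spec command once per database, stopping at the first failure.
# RSPEC is a hypothetical override for the command to run; it defaults to rspec.
run_all() {
  for url in \
    "postgres://postgres@localhost:5432/ccng" \
    "mysql2://root:password@localhost:3306/ccng"
  do
    echo "Running specs against $(db_scheme "$url")"
    DB_CONNECTION="$url" "${RSPEC:-rspec}" || return 1
  done
}

# Example (needs both databases running locally):
#   run_all
```

Stopping at the first failing database keeps the output focused on one failure at a time; drop the `|| return 1` if you would rather see results for both.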

Travis currently runs two build jobs, one against Postgres and one against MySQL.

For more information about how Cloud Foundry aggregates and streams logs and metrics, see Overview of Logging and Metrics.
